Claude Agent Teams Explained: AI for Complex Projects (2026 Guide)

February 27, 2026

$7.80. That’s what it cost to produce a full week of social media content — three days of LinkedIn posts, X threads, Instagram captions, video storyboards down to the second, and a sourced document with verified statistics. Fifteen minutes from prompt to deliverable. From a single prompt that spawned five AI agents, each one a specialist, each one observable in its own terminal panel.

Claude Opus 4.6 shipped Agent Teams earlier in 2026, and the timing caught the industry off guard. Multi-agent orchestration was on many AI roadmaps for this year, but few expected a working version, even an experimental one, to ship this early.

Here’s what that means for you — and why the gap between “person who prompts a chatbot” and “person who manages an AI workforce” just became the most valuable skill in the room.

What Are Claude Agent Teams?

Agent Teams are a multi-agent orchestration feature that runs in Claude Code on Claude Opus 4.6. Instead of one generalist AI handling an entire task, the system spawns multiple specialized agents (researcher, strategist, copywriter, reviewer) that work in parallel, share context, and coordinate their outputs through a supervisor agent. Unlike sub-agents, each teammate can be observed, redirected, and interacted with independently.

The distinction from sub-agents matters. Sub-agents operate within a single session and only pass their final results back to the main agent. You can’t see what they’re doing. You can’t intervene. You can’t ask the research sub-agent to dig deeper while the writing sub-agent keeps working.

Agent Teams break that wall. With tmux, each teammate runs in its own pane. Teammates can request information from one another directly (the writing agent asking the research agent for more data, for instance), while a supervisor agent coordinates sequencing, prevents duplicated effort, and handles error recovery.
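To make that coordination pattern concrete, here is a minimal Python sketch of a supervisor, a writer, and a researcher exchanging a direct request. It is an illustration of the general pattern only, not how Claude implements Agent Teams. Every name in it (`researcher`, `writer`, `supervisor`, `run_team`) is hypothetical, and the "research" is a placeholder string.

```python
import asyncio


async def researcher(requests: asyncio.Queue) -> None:
    """Hypothetical teammate: answers questions until it receives None."""
    while True:
        item = await requests.get()
        if item is None:  # sentinel from the supervisor: no more questions
            break
        question, reply = item
        # Placeholder for real research work.
        reply.set_result(f"data for {question!r}")


async def writer(requests: asyncio.Queue) -> str:
    """Hypothetical teammate: asks the researcher directly for a missing fact."""
    reply = asyncio.get_running_loop().create_future()
    await requests.put(("engagement stats", reply))
    fact = await reply  # wait for the researcher's answer
    return f"draft using {fact}"


async def supervisor() -> str:
    """Start both teammates, wait for the draft, then shut the researcher down."""
    requests: asyncio.Queue = asyncio.Queue()
    research_task = asyncio.create_task(researcher(requests))
    draft = await writer(requests)
    await requests.put(None)
    await research_task
    return draft


def run_team() -> str:
    """Convenience wrapper so the sketch can be called from ordinary code."""
    return asyncio.run(supervisor())
```

The point of the sketch is the shape: the writer talks to the researcher without routing through the supervisor, and the supervisor only handles startup, sequencing, and shutdown.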

When we ran our social media content project, the system spawned the four roles we asked for: a strategist, a copywriter, a visual concept agent, and a reviewer. On the second iteration, it added a researcher and a copy editor on its own, agents we never mentioned in the prompt.

That’s the shift. You stop being a prompt engineer. You become a project manager.

Why Agent Teams Outperform Single-Model Prompting

A single AI model spreads its context window across every dimension of a complex task (research, strategy, creation, review), and the quality of each dimension suffers. Agent Teams reduce this tradeoff by assigning each dimension to a dedicated agent focused on that one function. In our test, the output was more specific than anything we got from a single-model session.

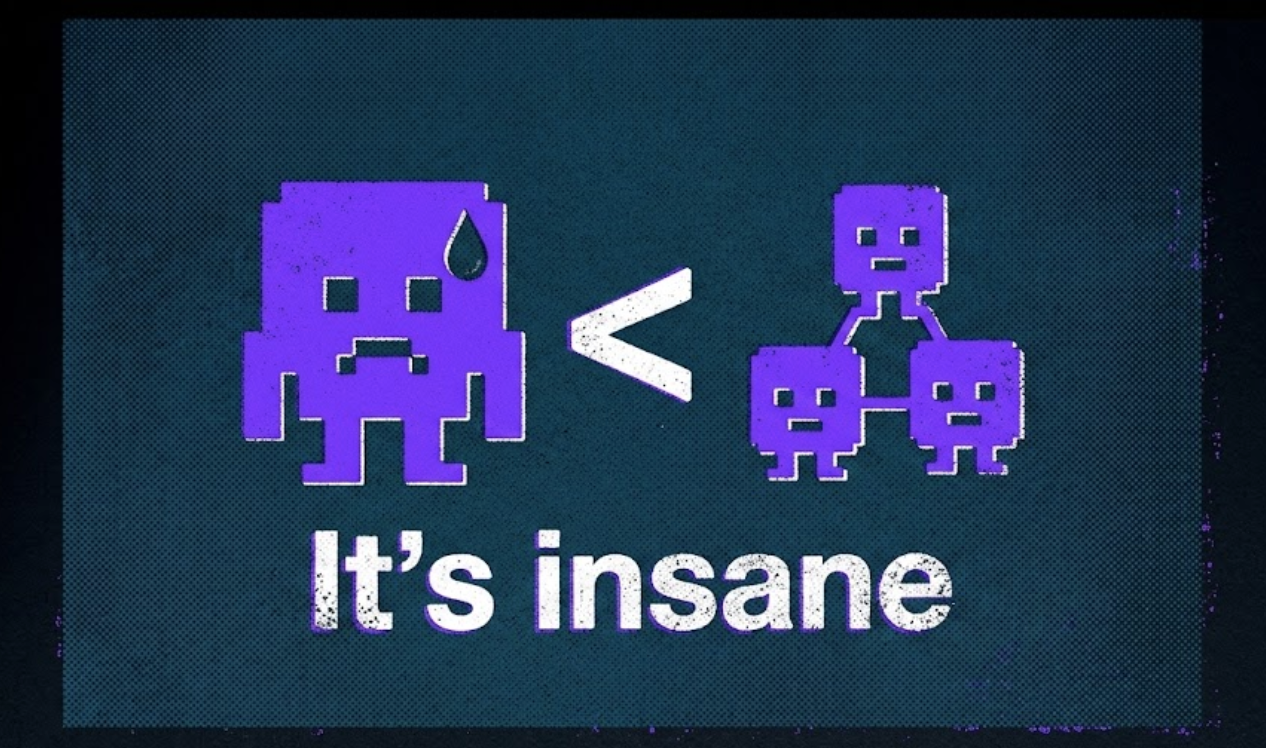

Think of it this way. Ask one person to research your audience, plan a campaign strategy, write platform-specific copy for five channels, design visual concepts, and then QA the entire package. Even a talented generalist will produce mediocre work by the third deliverable because their attention is fractured.

Now give each task to a specialist who only does that one thing. The researcher goes deep. The strategist builds on the research without the cognitive overhead of also writing copy. The reviewer catches errors that the creator, who’s been staring at the same draft for twenty minutes, would miss.

That’s the architecture. And the results bore it out in our test:

- The copywriter agent produced LinkedIn posts with metric-heavy bullet formatting, X posts trimmed to character limits with industry hashtags, and Instagram captions with 15+ relevant hashtags — each tailored to the platform’s native content style.

- The visual concept agent generated image briefs with specific color schemes, aspect ratios (1080×1080 for Instagram, banner format for LinkedIn), and 30-to-45-second video storyboards broken down frame by frame.

- The reviewer agent flagged five specific action items after the first pass — and when we fed those back to the lead agent, it delegated them across four agents working in parallel.

- The researcher agent (spawned autonomously in the second iteration) cross-referenced every statistic in the content against live web sources and replaced inaccurate numbers with verified ones. It even produced a standalone sources document.

A single Claude session doesn’t have the context window depth to maintain this level of specificity across every platform, every day, every content type. We know because we’ve tried.

See Agent Teams in Action

The full demo, from prompt to deliverable, with tmux panes showing each agent working in parallel, is worth watching in real time.

How to Set Up Claude Agent Teams: A Step-by-Step Walkthrough

Setting up Agent Teams requires three things: Claude Opus 4.6 access through a Pro ($20/month) or Max ($100–$200/month) plan, a single configuration line added to your Claude Code settings.json file, and (optionally) tmux installed for per-agent terminal panes.

Here’s the exact sequence:

Step 1: Get Claude Opus 4.6 Access

You need either the Pro plan or the Max plan. Pro works for 2–3 Agent Teams tasks per day. Max ($100/month or $200/month for extended credits) handles 8–10 complex tasks in a 5-hour window. For professional use — where you’re running Agent Teams on client work or production content — Max pays for itself by the second task.

Step 2: Enable Agent Teams in Settings

Agent Teams is an experimental feature, enabled through configuration. Open your Claude Code configuration folder, find settings.json, and add the agent teams configuration flag. The exact line is documented in the Claude Code docs.
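If you would rather script the change than edit the file by hand, a small helper like the one below can merge a flag into settings.json without touching your other settings. Treat it as a sketch under assumptions: the flag name `CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS`, its placement under an `env` key, and the value `"1"` are our best understanding, not something confirmed in this article, so check them against the Claude Code docs before relying on them. The function name `enable_flag` is hypothetical.

```python
import json
from pathlib import Path

# ASSUMPTION: flag name and "env" placement; confirm in the Claude Code docs.
ASSUMED_FLAG = "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"


def enable_flag(settings_path: Path, flag: str = ASSUMED_FLAG) -> dict:
    """Set env[flag] = "1" in a settings.json file, preserving other keys."""
    settings = {}
    if settings_path.exists():
        # An empty file is treated as an empty settings object.
        settings = json.loads(settings_path.read_text(encoding="utf-8") or "{}")
    settings.setdefault("env", {})[flag] = "1"
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings
```

You would call it with the path to your own settings file, for example `enable_flag(Path.home() / ".claude" / "settings.json")`, where that location is also an assumption to verify. Back up the file first.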

Step 3: Install tmux (Recommended)

Without tmux, Agent Teams still work, but every agent’s output appears in a single conversation thread. With tmux, each agent gets its own pane. You can watch the researcher pulling data while the copywriter drafts content in the pane next to it. More critically, you can intervene: stop an agent going down the wrong path, add a task you forgot, or redirect focus.
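A quick way to confirm tmux is installed before your first run is to look for it on your PATH. The snippet below is a minimal sketch using Python's standard library. The function names and the two mode labels are ours, chosen for illustration.

```python
import shutil


def tmux_available() -> bool:
    """Return True if a tmux executable is found on PATH."""
    return shutil.which("tmux") is not None


def display_mode() -> str:
    """Describe which of the two viewing modes from this step you should expect."""
    return "split panes" if tmux_available() else "single thread"
```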

Step 4: Write Your Prompt

Specify the task, the deliverables, and (optionally) the roles you want filled. In our test, we specified four roles: strategist, copywriter, visual concept agent, and reviewer. The system also spawned roles we never mentioned — a researcher and copy editor in the second pass.
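One way to keep prompts consistent from run to run is to assemble them from the same three parts every time. The sketch below shows that idea. The `build_brief` function and its section labels are hypothetical, and nothing about this layout is required by Agent Teams.

```python
from typing import List, Optional


def build_brief(task: str, deliverables: List[str],
                roles: Optional[List[str]] = None) -> str:
    """Assemble a plain-text project brief; the roles section is optional."""
    lines = [f"Task: {task}", "", "Deliverables:"]
    lines += [f"- {item}" for item in deliverables]
    if roles:
        # Only mention roles when the caller wants specific ones filled.
        lines += ["", "Roles to fill:"]
        lines += [f"- {role}" for role in roles]
    return "\n".join(lines)


brief = build_brief(
    "One week of social media content",
    ["LinkedIn posts", "X threads", "Instagram captions"],
    ["strategist", "copywriter", "visual concept agent", "reviewer"],
)
```

Leaving `roles` out produces a brief with only the task and deliverables, which leaves role selection to the system.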

One rule: reserve Agent Teams for tasks a single agent can’t handle well. A one-off email, a single blog outline, a quick code review — these don’t benefit from orchestration overhead.

When to Use Agent Teams vs. Single AI

The decision framework is straightforward: count the distinct skill sets the task requires. If it’s one or two, use a single agent. If it’s three or more, Agent Teams earn their cost.
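Written as code, the rule of thumb is a single comparison. This is a sketch of the heuristic above, with a hypothetical function name and a threshold you can adjust.

```python
from typing import Set


def recommend_mode(skills: Set[str], threshold: int = 3) -> str:
    """Apply the rule of thumb: three or more distinct skill sets means a team."""
    return "agent team" if len(skills) >= threshold else "single agent"


# A one-off email needs one skill; a content calendar needs several.
email = recommend_mode({"writing"})
calendar = recommend_mode({"research", "strategy", "copywriting", "review"})
```

Using a set means a skill listed twice is only counted once, which matches the "distinct skill sets" wording.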

How to Apply Agent Teams to Your Workflow Tomorrow

Start with a low-stakes project you already know the expected output for — this lets you evaluate Agent Teams’ quality against a known benchmark before handing off mission-critical work.

- Pick a repeatable multi-step task. Weekly content calendars, research briefs with QA, competitive analysis reports.

- Write your prompt like a project brief. Include deliverables, constraints, brand voice guidelines, target audience, and success criteria.

- Use tmux and watch the first run. Observe how agents divide labor, where they get stuck, and what the reviewer flags.

- Set spending alerts in your dashboard. At $7.80 per complex task on Pro, costs accumulate. Track usage for the first week and compare it against hours saved.
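For the first-week comparison, a few lines of arithmetic are enough. The sketch below uses the $7.80 per-task figure from our test. The run count, hours saved, and hourly rate are made-up inputs you would replace with your own, and the function name is hypothetical.

```python
def weekly_summary(runs: int, cost_per_run: float,
                   hours_saved: float, hourly_rate: float) -> dict:
    """Compare what a week of Agent Teams runs cost against the time it saved."""
    spend = round(runs * cost_per_run, 2)
    value = round(hours_saved * hourly_rate, 2)
    return {"spend": spend, "value_of_time": value, "net": round(value - spend, 2)}


# Hypothetical week: 5 runs at $7.80 each, 4 hours saved, time valued at $50/hour.
summary = weekly_summary(runs=5, cost_per_run=7.80, hours_saved=4, hourly_rate=50)
```

A negative `net` is a signal that the tasks you picked may be better suited to a single agent.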

Want to Go Deeper? Learn Agent Engineering From Scratch

Running Agent Teams is one thing. Understanding why they work — how LLMs reason, how to architect multi-agent systems, how to build production-grade AI applications — is what separates a power user from an AI engineer.

Agent Teams are a preview of how AI work gets done from here on out. The professionals who thrive will be the ones who design the workflows, debug the orchestration, and build custom agent systems for their organizations.

If that’s the direction you want to go, Turing College’s AI Engineering program covers the full stack: LLMs, LangChain, AI agents, and hands-on projects — built for people with coding experience who want to move into AI roles in 3–4 months.

Frequently Asked Questions

How much do Claude Agent Teams cost per task?

Costs vary by complexity, but our week-long social media content project cost $7.80 — roughly 50% of the Pro plan’s single-session usage. On the Max plan ($100–$200/month), you can run 8–10 comparable tasks within a 5-hour window.

Can I control which agents are spawned?

You can specify roles in your prompt — strategist, researcher, copywriter, reviewer — and the system will spawn those agents. Claude also spawns additional agents autonomously if it determines the task requires them. You can intervene mid-task via tmux to redirect or stop individual agents.

Do Agent Teams replace prompt chaining and custom GPT workflows?

For complex, multi-step tasks, yes. Prompt chaining requires you to manually sequence each step, copy outputs between prompts, and handle errors yourself. Agent Teams automate that orchestration. For simple tasks, prompt chaining or a single agent session remains faster and cheaper.

What’s the biggest limitation of Agent Teams right now?

Cost and overkill risk. At $7.80 per complex task, using Agent Teams for work that a single agent handles well is a waste. The feature also requires manual configuration and runs smoother in the terminal with tmux than in the desktop app.